Lab Performance at the Head of the Class, Says Review

In only its first year of existence, the new Department of Energy Contractor Performance Appraisal Process already had the quality bar virtually set by one of its laboratories. And if not for a slightly lower rating for Environmental, Health and Safety, Berkeley Lab would have gotten straight A’s.

As it was, the Lab’s fiscal year 2006 performance as rated by the University of California, and verified by the DOE Office of Science, was the head of the class among the 10 laboratories participating in the process. In eight different categories of science and operations, Berkeley Lab received seven A-grades and one B-plus.

This was not grade inflation, according to Berkeley Site Office Director Aundra Richards, who managed the local assessment process for the DOE. It was, pure and simple, a laboratory accomplishing its mission at an extremely high level.

“What led to this result was focused leadership, and a lot of smart work and hard work by employees throughout the Lab,” she said. “Director Steve Chu and his senior leadership team have been highly committed to excellence across the board. Lab COO David McGraw has been working to raise the caliber of laboratory operations to the same high level of performance that has long characterized the research programs. The new Office of Assurance, led by Jim Krupnick, also played a key role by tracking all contract-related commitments.”

In her presentation to the Office of Science assessment board in February, Richards provided the persuasive evidence that supported the grades. Science management and productivity ratings were uniformly high, led by across-the-board A-pluses for advanced scientific computing research. Lab security and emergency systems received the top grade in operations, followed closely by Lab leadership and financial management.

“Everyone can take justifiable pride in the FY2006 performance accomplishment,” Richards added. “It is a fitting tribute to this historical institution in its 75th year.”

Director Chu echoed this theme. “Without the hard work and constant focus on excellence by a lot of people in many different areas, the results could have been much different. I hope each one of our employees are taking pride in their contribution to this accomplishment,” he said.

Two years ago, the Office of Science decided to institute a common evaluation and grading structure for all of its labs. Appraisals are now done in eight performance categories: mission accomplishment; construction and operations of research facilities; science and technology program management; lab leadership and stewardship; safety, health and environmental protection; business systems; facility and infrastructure management; and security and emergency management.

At stake in this exercise are not just reputation and report cards. The available fee earned by a contractor is awarded based upon overall scores. Thus Berkeley Lab earned 97 percent of its available fee for the science and technology (S&T) scores, and 100 percent on the management and operations (M&O) side. That translates to more than $4.3 million of unrestricted funds that UC can apply to more research.

Even more significant, the Lab maintains its qualification for a non-competed contract extension with the University of California. Based on formulas developed within the Lab’s operating contract, performance goals were met in its first two years of the new agreement, and if goals in FY07 are similarly achieved, Berkeley Lab will be awarded three additional years as a UC-managed lab.

That’s a big “if,” according to Berkeley Lab Director of Institutional Assurance Jim Krupnick.

“We have to get at least a B-plus in each of the M&O categories and at least an A-minus in the S&T areas,” Krupnick said, noting that at this point – halfway through FY07 – the Laboratory lags in at least one area, safety statistics. Berkeley was the only one of the five multi-program labs to achieve its safety goals in FY06, but the Office of Science tightened its goals for this year, and Berkeley Lab’s numbers in accidents and injuries are higher than the new targets.

Similarly, property management grades could be problematic unless the Lab’s wall-to-wall inventory being conducted this year accounts for all assets.

So when you’re ranked at the top, it’s a special challenge to stay there.

“There are still improvements to be had,” Krupnick said. “The Director and the Chief Operations Officer have it as a goal to maintain world-class science and excellence in operations. And there’s no reason why we can’t do it again.”

“Going forward, it will be a challenge to maintain this leadership position as other labs will strive to improve,” added BSO’s Richards. “Keep up the great work, but don’t relax. I encourage all Lab employees to do their part to help LBNL continue to set the DOE ‘gold standard’ for world-class research and operational excellence.”

Mopping up Cholesterol By Copying Nature’s Way

Hoping to take a page from nature’s playbook, a multi-institutional team of researchers led by Berkeley Lab biochemist John Bielicki has learned how a particle that sweeps cholesterol from the body forms in the arteries. Their goal is to create a therapy that jumpstarts this process in people who suffer from atherosclerosis, a life-threatening disease in which the blood vessels that feed the heart become clogged.

“We want to devise a therapeutic that mimics how nature keeps the arteries clear of cholesterol,” says Bielicki of the Lab’s Life Sciences Division.

Bielicki

They’re not there yet. A successful drug is still years away. But Bielicki’s team has completed the necessary groundwork. In a pair of recently published studies, they reveal in unprecedented detail how the cholesterol-scouring particle, the so-called good cholesterol also known as high-density lipoprotein (HDL), is assembled.

At the heart of this process are two proteins present in the blood called apolipoprotein E and A-I. These proteins bind with another protein, called ABCA1, which resides in the membranes of certain cells that line arterial walls. When these proteins bind, ABCA1 kicks into gear and pumps cholesterol from the cell, unloading the potentially harmful substance onto the E and A-I proteins. The resulting protein-cholesterol molecule shapes itself into an HDL particle, which is then whisked away to the liver for disposal. Along the way, the HDL particle mops up still more cholesterol from the arteries. Problem solved.

Unfortunately, this bit of arterial housekeeping short-circuits in people with atherosclerosis. Their HDL level remains low and can’t keep pace with ever-thickening deposits of the fatty plaque.

To fight this problem, Bielicki’s team zeroed in on the initial step that sets the HDL-assembly process in motion. Specifically, they determined how the apolipoprotein E and A-I proteins attach themselves to ABCA1.

“This is the rate-limiting step, and generally the slowest one, but when it gets started, the process takes off and everything else falls into place,” says Bielicki. “Now, our research shows how this step works. We have isolated the active binding domains on apolipoprotein E and A-I that interact with ABCA1.”

With this information, they can pinpoint the amino acids involved in the interaction, as well as the molecular forces that allow the proteins to extract cholesterol from arterial walls and form HDL. Their work opens the door for the development of a synthetic molecule that mimics this process — unleashing a powerful snowball effect that cleans dangerously congested arteries.

Copying nature to fight cholesterol deposition isn’t a new strategy. An artificial HDL variant under development by Pfizer Inc. has been found to reduce atherosclerosis in people. But this approach has drawbacks. It requires a large protein composed of hundreds of amino acids that is expensive and time-consuming to synthesize. In addition, because it is a variant protein and large in size, it could trigger an immunological response. And HDL has many roles besides ridding the arteries of cholesterol. This means that a synthetic HDL particle designed to mop up cholesterol will likely get sidetracked by other reactions, limiting its potency.

Instead, Bielicki proposes a much smaller protein composed of only a few amino acids that selectively targets the initial steps of HDL assembly. It would not become entangled in the many other roles required of HDL, which would maximize its specificity and potency. And because the synthetic protein is small, it would be cheap to manufacture and affordable to use — a critical characteristic of a drug designed to treat one of the leading causes of illness and death in the U.S. Its small size would also enable it to go unnoticed by the body’s immune system, enabling it to work longer than a larger drug.

“It would be a targeted therapeutic that facilitates cholesterol transport out of the arteries,” says Bielicki. “The plan is to figure out how nature works and then devise a therapeutic.” This research has resulted in several Berkeley Lab patents that have been licensed.

Director Chu’s Column

Talking Together Over Brown Bags

Soon after I arrived at Berkeley Lab in 2005, I sought to understand the type of workplace we had here. The best way I knew to get a real sense of what was going on was to talk to the people “in the trenches” who were doing so much of the work.

So I arranged a series of lunchtime “brown bags” in the lower cafeteria — small group discussions with no agenda and no members of upper management present. These informal conversations, which I have attempted to maintain on at least a one-per-month schedule, have been invaluable to me in finding out about people’s thoughts and concerns about Berkeley Lab and the way it works (or doesn’t).

I have been pleasantly surprised at how candid and forthcoming people have been with me. I sometimes joke that the brown bags are a “subversive” way of finding out what’s really going on. By that, I mean that the brown bags give me a reality check on what I hear through normal channels. In the same way, I am grateful to have the opportunity to explain programs and management decisions that employees might want to know more about.

Over the course of more than a dozen of these brown bags (we rotate them across the divisions and departments), the topics of conversation have included science, federal policy, workplace conditions, budgets, the energy challenge, and the nuts-and-bolts of everyday tasks. I take these inputs back with me to my senior managers and, when necessary, ask them to follow up on issues that need attention.

Another initiative I embraced came from the Diversity Best Practices Committee, which had a desire to take the pulse of the workplace and determine if the environment is conducive to enabling the best science and the highest employee productivity. A first-ever workplace climate survey was developed and offered last summer, and an impressive one-third of all employees, including 60 percent of all career employees, filled out the survey.

Now we are in the process of interpreting what those results mean. Although the overall results were generally favorable in terms of how employees feel about the Lab, a few responses merit closer attention in areas such as career advancement, decision-making, and management efficiency.

To explore these attitudes more fully, I scheduled three employee brown bags for this month. Two were held this week, and the third is scheduled for next Tuesday. If you have not yet participated but wish to share your views about the workplace, please join me at noon in Perseverance Hall for our final discussion.

And as I get around to all the divisions in my monthly brown bag schedule, I hope to talk with many of you in a continuing dialogue about how to make Berkeley Lab the best that it can be.

Introducing the Lab's New Officers for Human Resources, Information Technology

They come to Berkeley Lab from vastly different disciplines, but the two women hired recently to head up the Information Technology and Human Resources functions share some common goals as they address the challenges of a Lab redefining itself and its energy mission. Rosio Alvarez, the Chief Information Officer, and Vera Potapenko, Chief Human Resources Officer, both cite the Lab’s scientific excellence and highly dedicated staff as a principal draw in luring them from their successful careers across the country to join the Lab community.

Rosio Alvarez — Chief Information Officer

Alvarez

Photo by Roy Kaltschmidt, CSO

Alvarez, who was born and raised in Los Angeles, says she had no inclination to return to the west from her job as head of the IT Division for the University of Massachusetts, Amherst, but the opportunity at the Lab seemed “too good to be true.” She saw the Lab as a perfect opportunity to work exclusively with scientists who were at the top of their field, without the additional demands of serving students and staff as she had at Amherst.

Alvarez acknowledges there are immediate challenges ahead for the IT Division and the Lab. “Too many brilliant ideas and too few dollars to fund them,” she says. Still, she is intrigued by the promise of computational science and the potential long-term contributions IT can make to the Lab’s scientific enterprise.

“As many have suggested, computing is the third pillar of science: theory, experiment and computation. I’d like to see the IT division play a leading role in supporting computation at the Lab,” Alvarez says. She points out that in the past, researchers needed access to unique instruments in order to gather data and make discoveries. Now, the growing costs and complexity of tools prohibit proliferation of instruments. Instead, there are a few unique instruments producing data, for example, the Large Hadron Collider, with that data housed in a few locations.

“The result is that now discovery is based on good questions rather than access to unique tools,” Alvarez says. “So we go from hypothesis-driven science: ‘I have a question, let me find data,’ to exploratory-driven: ‘I have data, what can I glean from it?’ And we have plenty of data, at the petascale level now. So it is much more about access, movement and management of very large amounts of data: the Petascale Challenge.”

“IT is critical to achieving these goals, it’s not an afterthought or simply a utility as some suggest; it is about innovation and creation if you want to achieve excellence in computational science.”

Are there particular challenges that face a woman in the IT world? “There is no doubt that IT is a male-dominated field that is often hostile toward women,” she says, “but I’ve learned to establish strong working relationships with men and women in the field who are supportive of me independent of gender or other social categories.”

Vera Potapenko — Chief Human Resources Officer

Potapenko

Photo by Roy Kaltschmidt, CSO

Coaxed from her job at Northwestern University after more than 30 years in the Chicago area, Vera Potapenko found herself drawn to Berkeley by the opportunity to work with brilliant and dedicated employees, as well as the challenge of helping the Lab navigate to meet its new institutional goals in energy research.

Potapenko has worked in both the public and private sectors, in fields ranging from pharmaceuticals to academia.

What are some of the HR challenges she sees ahead for Berkeley Lab? Recruitment and retention of talent, Potapenko says. “We deal in intellectual capital. We are only as good as our people; they are our competitive advantage.” In that same vein, Potapenko sees diversity as a major goal of recruitment. She wants to build a consciousness about the value and importance of recruiting a diverse, talented workforce into the culture of each division.

“We should all be asking, ‘How do we tap into the pipeline, keep track of where talented minorities and women are in the education chain, keep a shortlist of existing talent, know what they are doing, and keep in touch with them?’” Recruitment, like retention of talent, is very individual, she says, and very personal. It’s letting people know they’re valued.

As for navigating a changing laboratory landscape, Potapenko employs three principles. The first is communication, making sure that employees have the information they need from HR, and that HR communicates well and often at the local as well as division level. She has already met with Lab division directors and senior managers to understand their needs, and she wants to make sure her department is accessible to all employees.

The second is visibility: getting out into the Lab community to understand what is important to employees, what their issues are, what’s working and what isn’t. Potapenko wants to reach deep into the divisions to learn the challenges and concerns of employees. Her goal is to get to know the staff and provide them access to her, both individually and in small groups.

Finally, there is involvement. Potapenko is eager to create opportunities to involve lab staff in any HR initiatives that have lab-wide impact. She has already spent time working with her own staff on areas that need improvement, learning about their experiences, building a cohesive team that is well equipped to go out into the Lab community and get involved in fixing the processes that need fixing.

What about the challenges facing women at the Lab? Potapenko believes the most important challenge for women in science is to reach out to each other to build a strong community in order to provide a network of support for their issues and needs. There is strength in numbers, she says. That community will go a long way in building consensus about advancements that need to be made for women. And consensus is one of the principles Potapenko values most.

Berkeley Lab View

Published once a month by the Communications Department for the employees and retirees of Berkeley Lab.

Reid Edwards, Public Affairs Department head

Ron Kolb, Communications Department head

EDITOR

Pamela Patterson, 486-4045, pjpatterson@lbl.gov

ASSOCIATE EDITOR

Lyn Hunter, 486-4698, lhunter@lbl.gov

STAFF WRITERS

Dan Krotz, 486-4019

Paul Preuss, 486-6249

Lynn Yarris, 486-5375

CONTRIBUTING WRITERS

Ucilia Wang, 495-2402

Allan Chen, 486-4210

David Gilbert, (925) 296-5643

DESIGN

Caitlin Youngquist, 486-4020

Creative Services Office

Berkeley Lab

Communications Department

MS 65, One Cyclotron Road, Berkeley CA 94720

(510) 486-5771

Fax: (510) 486-6641

Berkeley Lab is managed by the University of California for the U.S. Department of Energy.

Online Version

The full text and photographs of each edition of The View, as well as the Currents archive going back to 1994, are published online on the Berkeley Lab website under “Publications” in the A-Z Index. The site allows users to do searches of past articles.

Flea Market is now online at www.lbl.gov/fleamarket

Award-winning Plant Biologist Comes to Lab

Shrinking polar icecaps and rising prices at the gas pumps have put Americans on notice that we must end our dependence on petroleum and develop carbon-neutral and renewable sources of fuel to meet our transportation energy needs. The task is daunting, but if you talk with Chris Somerville, a new addition to the Physical Biosciences Division (PBD) who played a key role in procuring the BP grant for the Energy Biosciences Institute (EBI), you’ll find ample reasons to be optimistic.

“Currently, total annual global energy consumption is about 13 terawatts (13 trillion watts) and is projected to nearly double by 2050,” says Somerville. “This represents only a tiny fraction of the nearly 100,000 terawatts of solar energy available to us each year, which makes me optimistic that we will eventually learn how to obtain most or all of our energy from sunlight.”
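The arithmetic behind Somerville’s comparison can be checked in a few lines. The sketch below uses only the figures quoted above (13 terawatts of demand, 100,000 terawatts of solar supply). Treating “nearly double” as exactly double is an assumption made here for illustration, as are the variable and function names.

```python
# Figures quoted in the article.
CURRENT_DEMAND_TW = 13.0        # global energy consumption, terawatts
SOLAR_SUPPLY_TW = 100_000.0     # solar energy available, terawatts

# Assumption for illustration: "nearly double by 2050" treated as exactly 2x.
GROWTH_FACTOR_2050 = 2.0


def share_of_solar(demand_tw, supply_tw=SOLAR_SUPPLY_TW):
    """Return demand as a fraction of the available solar supply."""
    return demand_tw / supply_tw


share_now = share_of_solar(CURRENT_DEMAND_TW)
share_2050 = share_of_solar(CURRENT_DEMAND_TW * GROWTH_FACTOR_2050)

print(f"Today: {share_now:.3%} of available solar power")   # 0.013%
print(f"2050:  {share_2050:.3%} of available solar power")  # 0.026%
```

Even under the doubled-demand assumption, consumption stays well below one-tenth of one percent of the quoted solar figure.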

Somerville

Somerville, 59, is an award-winning plant biochemist who directs the Carnegie Institution’s Department of Plant Biology, a non-profit scientific research organization located on the campus of Stanford University. He’s also a professor in Stanford’s Department of Biological Sciences. He joined the Berkeley Lab staff this past August at the invitation of PBD director Jay Keasling, but it was Berkeley Lab Director Steve Chu who inspired him. “I share Steve’s belief that global climate change is the biggest scientific challenge of the 21st century and I wanted to join him in his effort to help turn the situation around,” Somerville said.

Like Chu, Somerville is a strong public advocate of the potential of fuels derived from plant biomass. He is a leading authority on the structure and function of plant cell walls, which comprise most of the body mass of higher plants.

The main component of plant cell walls is lignocellulose, a tough, woody combination of polysaccharides and lignin. It is widely believed that producing ethanol and other biofuels from lignocellulose could be far more energy-efficient and environmentally benign than the current technology for producing ethanol from corn.

“It makes much more sense to derive ethanol from the entire body of the plant rather than just the grain because the yield of sugar per unit of land per year will be much higher,” Somerville has said.

The key to implementing the biofuels option, he says, will be the use of highly productive C4 perennial grasses, such as Miscanthus and switchgrass. C4 grasses feature exceptionally high rates of photosynthesis and water-use efficiencies, which means they can produce more biomass than species such as alfalfa or poplar that lack the physiological and biochemical characteristics that define C4 types.

Like ethanol made from corn, cellulosic ethanol is produced by extracting fermentable sugars from the feedstock. Crucial to the technology’s future economic success will be finding the most effective combination of enzymes to decompose lignocellulose into sugars.

“Existing catalysts and processes do not achieve anything near theoretical efficiency,” Somerville says. “Thus, making cellulosic ethanol commercially viable is essentially an engineering problem in which we must learn to make the overall process more cost effective through a series of process innovations.”

Upon joining Berkeley Lab, Somerville’s expertise was put to immediate use as he helped write the proposals for both the EBI and the Joint BioEnergy Institute (JBEI), another major collaborative competition, sponsored by the U.S. Department of Energy (DOE). He is well-versed in putting together major research projects, having been the chair of the Arabidopsis Genome Initiative, an international consortium that sequenced the first plant genome, and one of the founders of The Arabidopsis Information Resource (TAIR), a database on Arabidopsis thaliana. Arabidopsis, a relative of the mustard plant, now serves as a model organism for plant and molecular genetics, thanks in large part to Somerville’s efforts.

Somerville is a native of Canada who earned all of his degrees from the University of Alberta, where he also met Shauna, his wife of 31 years. She, too, is a plant biologist, an Arabidopsis expert, and they have adjacent laboratories at Stanford. They became U.S. citizens in 1995.

President Bush, in his last State of the Union address, called for the production of 35 billion gallons a year of renewable and alternative fuels by 2017. Somerville has gone on record stating that the president’s goal is “technically possible.” Here at Berkeley Lab, through his own science and his leadership role at EBI, he will help transform this technical possibility into commercial reality.

The Helios Story: A Performance in Three Acts

Chu, Alivisatos, Keasling to Speak at Berkeley Repertory Theatre

Members of the Berkeley Lab and local communities have been reading a lot lately about the process of global warming, the impact of carbon-based energy on the environment, and the Lab and campus partnerships seeking new forms of alternative fuels and energy efficiency.

Now they can hear about it for themselves.

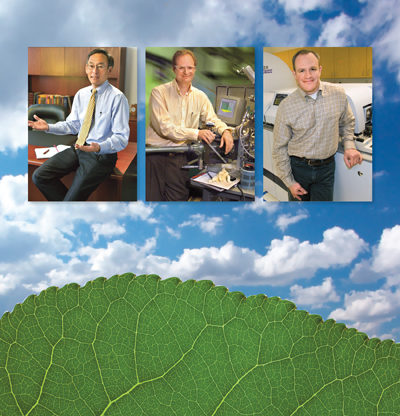

From left to right, Chu, Alivisatos, and Keasling

Another special partnership, this one between the Berkeley Lab Friends of Science and the Berkeley Repertory Theatre, has resulted in a “Science at the Theatre” series of free springtime public talks. The last three in the series are devoted to the Lab’s Helios project, including the many initiatives under its umbrella. This broad effort aims to develop solar-based, carbon-neutral, affordable energy while reducing overall consumption in an environmentally responsible way.

Included within the Helios portfolio is the Energy Biosciences Institute (EBI), a collaboration between energy giant BP and the researchers at Berkeley Lab, UC Berkeley and the University of Illinois.

The recognized captain of this alternative-fuels journey, Lab Director Steve Chu, will set the stage with his April 23 talk on “The Energy Problem: What the Helios Project Can Do About It.”

He will be followed by Paul Alivisatos, nanotechnology pioneer and the Lab’s Associate Director for Physical Sciences, whose topic will be “Nanoscience at Work: Creating Energy from Sunlight” on May 14.

And Jay Keasling, Division Director for Physical Biosciences and Discover Magazine’s “Scientist of the Year” in 2006, will address “Renewable Energy from Synthetic Biology” on June 4.

All three talks are on Monday nights from 5:30 to 7 p.m. in the Berkeley Rep’s 600-seat Roda Theatre.

If the opening talk of the “Science at the Theatre” series is any indication, one is advised to arrive early to be assured of a seat. Astrophysics Nobel Prize winner George Smoot addressed close to 500 avid listeners on March 5 about his investigations of the “Big Bang” and his discovery of traces of the relic radiation left over from the creation of the universe.

Co-sponsors of the community lecture series include the UC Berkeley campus, Chabot Space and Science Center, The Exploratorium in San Francisco, and the science departments of Berkeley, Oakland and Albany high schools.

Star-Gazing Across an Ocean: the Keck Remote Observing Facility

Kyle Dawson, Joshua Meyers, and Rahman Amanullah in Bldg. 50B’s Keck Remote Observing Facility

Hawaii, Feb. 21, 3:30 in the morning: Nao Suzuki and Rahman Amanullah, two Berkeley Lab postdocs, are chasing supernovae, using one of the twin Keck telescopes on Mauna Kea. An observing assistant (OA) occasionally dashes out of the control room into the frigid air, 4,205 meters above sea level, to guess at the changing weather.

All night the sky has been veiled with broken cirrus, shrouding the night’s original targets: candidate Type Ia supernovae, the standard candles the Supernova Cosmology Project uses to measure the expansion history of the universe. Suzuki and Amanullah have had to turn to brighter objects, the host galaxies of nearby supernovae, visible through the clouds.

Two hours before dawn the sky begins to clear. Amanullah reaches for the chart for 07D3cc, a candidate found days earlier by the SuperNova Legacy Survey (SNLS). After three lengthy exposures — each unfortunately dimmer than the one before it — dawn brightens the sky. Is the spectrum bright enough to identify the type and redshift of 07D3cc? The answer will have to wait for data analysis.

So ended a typical midwinter night of observing, with one wrinkle: neither observer was at the telescope. Suzuki was sitting in Keck Headquarters in Waimea, a town 32 kilometers from the summit, and Amanullah was 3,800 kilometers away in Berkeley Lab’s Keck Remote Observing Facility, a windowless room deep in the bowels of Bldg. 50B.

On the night of Feb. 20, Nao Suzuki was the only Berkeley Lab observer in Hawaii

Virtual Network Computing (VNC) windows, running simultaneously in all three locations, controlled the settings of the telescope and its instruments. With a few clicks on the keyboard, either Suzuki in Waimea or Amanullah in Berkeley could change filters, set exposure times, open the spectrograph’s shutters and, with the OA’s cooperation, move the 300-ton telescope to pinpoint a distant supernova in a field of stars.

Tony Spadafora of the Physics Division is the project’s scientific coordinator. “Not so long ago we’d have to send two or three people to Hawaii for one night’s observing,” he says. “Now we send one.”

Deb Agarwal and Craig Leres of the Computational Research Division built the system. The Keck’s remote-operations coordinator Bob Kibrick of UC Santa Cruz provided the guidelines: the remote observing room can be used for no other purpose, and communications with the telescope have to be over a dedicated subnet, firewalled from possible interference. By using spare high-resolution monitors and multi-monitor graphics cards, Agarwal and Leres were able to get the system up and running in just two weeks in October 2005.

Some of the Keck’s remote observing sites in California are designated “mainland only,” meaning nobody needs to go to Hawaii. Berkeley Lab operates in “eavesdrop” mode: an expert observer must be present in Waimea. Mainland-only status will require new equipment, including back-up communications. Even in eavesdrop mode the facility has benefited both the Lab and UC Berkeley.

“Most important has been training students,” says Spadafora. “Another enormously helpful aspect has been the availability to campus astronomers.”

Whether 32 kilometers away or 3,800, remote observing presents its own challenges. When setup for the night of Feb. 20-21 began at 2 p.m. Hawaii time, 4 p.m. in Berkeley, the Virtual Network Computing windows were not responding.

In Berkeley, Agarwal was at the keyboard and on the phone with UCSC’s Kibrick. “There are various ways to start the VNC servers,” she explained later. “The method they chose that night did not leave them in a functional state.” Kibrick taught the observers how to fix the problem if it ever recurs.

Spadafora and Physics postdoc Kyle Dawson were also in the tiny room. Dawson, trained on the Keck and the remote facility’s most experienced observer, was there to introduce Amanullah to its operation. Joshua Meyers, a graduate student Dawson had recruited into the Supernova Cosmology Project, would analyze the supernova data acquired that night.

With the VNCs operational again, Dawson in Berkeley and Suzuki in Waimea discussed target options, given the iffy weather. Once advance preparations were finished, Amanullah was on his own.

Tony Spadafora is scientific coordinator of the Lab’s Remote Observing Facility, which was assembled by Deb Agarwal

“Winter nights are longer, but on Mauna Kea February is the worst month for weather,” says Suzuki. “Even with high-tech instruments, ground-based telescopes are always fighting the weather.” Despite the difficulties, he and Amanullah hoped they had captured an early morning prize, SNLS candidate 07D3cc.

Back in Berkeley, Suzuki and Meyers converted the raw data into a faint spectrum. Meyers says, “I cycled through every supernova template I had” — some 350 individual templates — “and found the best match.” Supernova 07D3cc was indeed a Type Ia, with a redshift of 0.7, roughly nine and a half billion light years away.

SNLS is constructing a Hubble diagram showing the history of the expansion of the universe, which will include 500 Type Ia supernovae. Says Suzuki, “This hard-won supernova is going to be one data point among those 500.”

Lorentz Revelation Aids Physics Computations

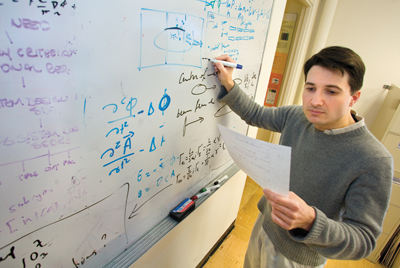

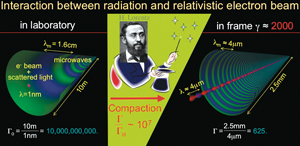

A new mathematical discovery could reduce by orders of magnitude the number of computational operations required to solve highly complex problems in physics. Jean-Luc Vay, a physicist with Berkeley Lab’s Accelerator and Fusion Research Division (AFRD), has found a surprisingly simple way to use the Lorentz transformation, the set of equations that forms the mathematical basis for Albert Einstein’s theory of special relativity. Among other possibilities, Vay’s technique could make the future design of free electron lasers and particle accelerators a faster and more accurate process.

AFRD physicist Jean-Luc Vay has found a way to reduce by orders of magnitude the number of computational operations required to solve highly complex problems in physics. Photo by Roy Kaltschmidt, CSO

The equations in the Lorentz transformation, named for the Dutch physicist Hendrik Lorentz, describe the relationship between the space and time coordinates of two systems moving at a constant speed relative to each other. In most cases, these equations are calculated from an “at rest” frame of reference, that is, the coordinates are described from the view of a stationary observer in the laboratory. For modeling interactions between particles or between particles and radiation, calculating the interactions from a stationary reference frame can require hours or even months of time on the most powerful of supercomputers.
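For reference, the textbook form of the transformation is shown below for a frame moving at constant velocity v along the x-axis relative to the laboratory. The choice of axis and the symbols are a common convention assumed here for illustration; they are not taken from Vay's paper.

```latex
\begin{aligned}
x' &= \gamma\,(x - v t), \\
t' &= \gamma\left(t - \frac{v x}{c^{2}}\right), \\
y' &= y, \qquad z' = z, \\
\gamma &= \frac{1}{\sqrt{1 - v^{2}/c^{2}}}.
\end{aligned}
```

Here primed coordinates are those measured by the moving observer, c is the speed of light, and the factor γ sets the size of the length contraction and time dilation.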

And that is often even with approximations to the fundamental equations.

The interaction of relativistic objects, however, need not be viewed from a frame of reference at rest in the laboratory. Instead, it can be viewed from any of an infinite number of moving frames of reference. According to Vay, within this infinite number of moving frames will be one that minimizes the range of space and time scales on which the objects interact. Find this optimal moving reference frame, Vay says, and you can reduce the number of calculations required to model the interactions.

“This conclusion, despite being a direct consequence of special relativity, is not widely known, even by many physicists,” said Vay. “By describing the space and time coordinates from the point of view of a moving observer, rather than a stationary observer, relativistic effects set in and there is, for each object, depending on its velocity in the laboratory, a contraction or dilation of space and time. As a result, we should be able to tackle mathematical problems that have been unmanageable, and we should also be able to put much more detail into problems for which computer resources have been barely adequate.”

The key to Vay’s success is that contrary to conventional scientific wisdom, which holds that under a Lorentz transformation the “complexity” of a system is invariant, when a system is observed from a moving frame of reference, it actually does become — from a “certain point of view” — less complex. For very complicated reasons, this reduced complexity makes it possible to reduce the computations required to describe such systems.

“It is very counterintuitive,” Vay said, “but even for physicists, relativity is counterintuitive enough to be confusing.”

Vay’s Lorentz transformation discovery will be reported in an upcoming issue of the journal Physical Review Letters in a paper entitled “On the Non-Invariance of Space and Time Scale Ranges Under Lorentz Transformation, and its Implications for the Study of Relativistic Interactions.”

Vay made his discovery while working on several projects, including one involving a free electron laser (FEL). In an FEL, a beam of electrons accelerated to near light speed is sent through an array of magnets, called a “wiggler,” which features alternating north and south poles. The alternating poles cause the electrons to oscillate back and forth, radiating light in the process. Modeling without approximations the interaction of electrons and radiation, including the output light, during the single pass of an electron beam through an FEL 10 meters in length would normally be impractical even on a supercomputer. However, by invoking a moving observer, Vay was able to select a reference frame that reduces the number of required arithmetical operation cycles from 10 billion to 625.

“In a proof-of-principle FEL calculation, I was able to reduce the number of operation cycles from 100 million to 2,000 and do the calculations on my laptop computer,” said Vay.
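A rough feel for why the choice of frame matters can be had from textbook FEL relations. The sketch below is not Vay's calculation and does not reproduce the operation counts quoted above. It assumes a weak wiggler, for which the lab-frame radiation wavelength is about the wiggler period divided by 2γ², while in a frame moving with the beam the wiggler is contracted by γ and the radiation wavelength is comparable to the contracted wiggler period. The function name, the 3-centimeter period, and γ = 50 are hypothetical.

```python
def fel_scale_ranges(wiggler_length_m, wiggler_period_m, gamma):
    """Return (lab_ratio, moving_ratio): longest over shortest length scale.

    Textbook weak-wiggler approximation, used here only as an illustration.
    """
    # Lab frame: the full wiggler length versus the short radiation wavelength.
    lab_wavelength = wiggler_period_m / (2.0 * gamma ** 2)
    lab_ratio = wiggler_length_m / lab_wavelength

    # Frame moving with the beam: wiggler length and period both contract
    # by gamma, and the radiation wavelength is about the contracted period.
    moving_length = wiggler_length_m / gamma
    moving_wavelength = wiggler_period_m / gamma
    moving_ratio = moving_length / moving_wavelength

    return lab_ratio, moving_ratio


# The 10 m length is from the article; the period and gamma are assumed.
lab, moving = fel_scale_ranges(wiggler_length_m=10.0, wiggler_period_m=0.03, gamma=50.0)
print(f"lab frame ratio:    {lab:.3g}")
print(f"moving frame ratio: {moving:.3g}")
print(f"reduction factor:   {lab / moving:.3g}")
```

Under these assumptions the range of length scales shrinks by a factor of 2γ², which is the kind of compaction that lets a simulation use far fewer grid cells and time steps.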

Using Hendrik Lorentz’s transformation equations to model the interaction between radiation and a relativistic electron beam requires 10 billion computational operations when the frame of reference is a stationary observer in the laboratory. If a moving observer is invoked, a reference frame can be selected that reduces the number of required computational operations to 625.

Another physics problem involving huge numbers of calculations is the interaction of accelerated particles with clouds of electrons. Take, for example, the International Linear Collider (ILC), a proposed accelerator that would create high-energy collisions between beams of electrons and positrons, their antimatter counterparts, in order to answer questions about the fundamental nature of matter, energy, space and time. The answers to such questions might be found in the aftermath of the ILC collisions. However, electron clouds, formed when the electron and positron beams are bent in magnetic fields, can diffuse the positron beam so that many fewer collisions occur.

“Electron clouds are a huge issue for the most recent particle accelerators, but especially so for future ones like the ILC,” Vay said. “By finding the reference frame which minimizes the range of space and time scales, we can greatly simplify the calculations of the fundamental mechanisms we need to study. The system to be studied becomes more compact spatially, the time of interaction is shorter, and the ratios between largest to smallest space and time scales are minimized.”

Still another area in which Vay’s new technique could play an important role is laser-plasma wakefield acceleration. The idea here is that high-powered beams of laser light in combination with a highly dense plasma can energize electrons at a thousand-fold faster rate than today’s microwave-based accelerator technology. The goal would be supercompact high-energy particle accelerators. For example, one trillion electron volts of energy could conceivably be reached in a machine only 30 meters long.

“Modeling the physics in a laser-plasma wakefield acceleration system will still require the use of a supercomputer even with the compaction of scale ranges, but instead of approximations we could examine the full range of physics, and perform hundreds of runs in the same amount of computer time we now need for a single run,” Vay said. “The concept of a laser-plasma wakefield accelerator becomes much more promising if we can calculate or reckon all of the physics in the system’s design.”

Lab Team Helping Smooth Flow of Water Data

A collaboration among Microsoft, Berkeley Lab and UC Berkeley is underway to make it easier for researchers to access and analyze collected data on water, with the goal of accelerating research in the increasingly important areas of water supply and climate change.

Called Microsoft e-Science, the project is part of the Berkeley Water Center’s effort to marshal expertise from public institutions and the private sector to enable researchers to easily access and work with water data. The year-old center is the brainchild of Berkeley Lab’s Computational Research Division (CRD), UC Berkeley’s College of Engineering and UC Berkeley’s College of Natural Resources.

Front row, Microsoft's Catharine van Ingen (left) and Stuart Ozer. Second row (l-r) Matt Rodriguez, Deb Agarwal, and Monte Goode with the Computational Research Division.

Local, state and federal governments have long collected detailed information about water supplies, such as measuring river flows and water content in winter snows. They use the data to make allocation decisions for farms, businesses and residential consumers. However, these agencies use different methods to collect and archive data, posing a challenge for scientists who need to retrieve and integrate all those datasets in order to carry out comprehensive analyses.

The e-Science project strives to ease that headache for scientists. The project team already has developed a prototype data server, which runs on Microsoft SQL Server 2005. The team is now testing the system by loading data about Northern California’s Russian River watershed collected by various agencies.

“Because of the differences in the data, the loading of each data file presents a new challenge, and matching data across different data sets is difficult,” said Deb Agarwal, head of CRD’s Distributed Systems Department and the Berkeley Water Center’s IT Advisor. “There is a perception that once the data is in an archive, science is enabled on a grand scale. But data availability is only the first step in the process.”

The project team has already demonstrated the prototype server to the scientific community. For example, at the FLUXNET Synthesis Workshop in Italy last month, project team members Matt Rodriguez, a CRD scientist, and Catharine van Ingen from Microsoft showed scientists how they could use the server to find and plot cross-network data in minutes, rather than days. The data set they analyzed comprised 600 site-years of data, most of which had not been used before in cross-site analysis. With the data server, scientists can spend their time exploring the data rather than collating it.

At the European Geosciences Union General Assembly 2007 in April, Agarwal, van Ingen and Dennis Baldocchi from UC Berkeley will discuss the server and their support of its users.

Microsoft’s support is critical because about 90 percent of the researchers accessing these data archives use Windows-based computers. Van Ingen brings expertise from her work as an engineering professor and software expert, as well as the knowledge of a Microsoft insider who knows where to turn for help in the company.

Developing the prototype server was an important milestone for the project. To build it, the project team started with the data archive of the AmeriFlux network of 149 research towers located around the Americas.

Using arrays of sensors, the towers provide continuous observations of ecosystem-level exchanges of CO2, water and energy, essentially recording how the ecosystem “breathes.” The AmeriFlux archive currently contains 192 million data points stored as hundreds of files.

Researchers analyzing this data currently download a copy of the data for use in local analysis. Since the data is continually being updated and corrected, each researcher typically ends up with a different version.

Data on the Russian River was added to the prototype server

Working with van Ingen and another Microsoft expert, Stuart Ozer, Agarwal and two members of her staff, Rodriguez and Monte Goode, designed the server to make the AmeriFlux data easier to use. The approach incorporated a database and a “data cube,” a type of database structure optimized for data mining.
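The idea behind a data cube can be sketched in a few lines. The example below is a simplified illustration, not the project's SQL Server implementation: it pre-aggregates measurements along hypothetical site and year dimensions so that averages over any combination can be looked up without rescanning the raw records. The function names, site names, variable name, and values are all assumptions made for the example.

```python
from collections import defaultdict


def build_cube(records):
    """Pre-aggregate (site, year, variable, value) records.

    Keys are (site, year, variable); None in a slot means "all values"
    of that dimension. Each cell holds [running_sum, count].
    """
    cube = defaultdict(lambda: [0.0, 0])
    for site, year, variable, value in records:
        for key in (
            (site, year, variable),
            (site, None, variable),
            (None, year, variable),
            (None, None, variable),
        ):
            cell = cube[key]
            cell[0] += value
            cell[1] += 1
    return cube


def cube_mean(cube, variable, site=None, year=None):
    """Look up a mean from the cube; returns None if the cell is empty."""
    total, count = cube.get((site, year, variable), (0.0, 0))
    return total / count if count else None


# Hypothetical tower measurements (illustration only).
records = [
    ("site_a", 2005, "co2_flux", 1.0),
    ("site_a", 2006, "co2_flux", 3.0),
    ("site_b", 2005, "co2_flux", 5.0),
]
cube = build_cube(records)
print(cube_mean(cube, "co2_flux"))                 # all sites, all years
print(cube_mean(cube, "co2_flux", site="site_a"))  # one site, all years
print(cube_mean(cube, "co2_flux", year=2005))      # all sites, one year
```

The trade-off is extra storage for the pre-computed cells in exchange for fast cross-site queries.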

While developing the server is a major part of the project, the long-term goal is to develop a portable system that can be maintained by the researchers themselves.

“Right now we’re at the edge of computer science and research, where we are developing tools that we hope will make this data server a natural research tool, a kind of ‘collaborative data server in a box,’ for science,” Agarwal said.

Learn more about the Berkeley Water Center at http://www-esd.lbl.gov/BWC/.

Berkeley Lab Science Roundup

Not quite flatland

Suspended graphene membrane

Although long studied theoretically as a single layer of graphite, naked sheetlike graphene, its carbon atoms arranged hexagonally in the plane, was not found in the real world until 2004. Led by Jannik Meyer of the Materials Sciences Division, a team including graphene’s discoverers Andre Geim and Kostya Novoselov suspended tiny sheets of the material, initially thought to be the first example of a perfect 2-D crystal, from a microscopic scaffolding and examined them under an electron microscope. In the Feb. 12 Physical Review Letters they report that the membranes, although only one atom thick, invariably twist by several degrees and that their surfaces have corrugations up to a nanometer out of the plane. Thus graphene is merely a “nearly perfect” 2-D crystal, residing in 3-D space.

Hydrogen bomblet

McCurdy, left, and Rescigno

Quantum theory makes it conceivable to calculate the behavior of subatomic particles from “first principles” — without depending on experiment — but for most of the last century the simplest interactions remained too complicated for even the biggest supercomputers. Late in 2005, the Chemical Sciences Division’s Tom Rescigno and Bill McCurdy and their colleagues reported the first-ever complete quantum mechanical solution of a system with four charged particles, namely, the double photoionization of a hydrogen molecule: one energetic photon in and, wham, two protons, two electrons out. In the Feb. 12 Physical Review Letters the same team reports new results, underlining the crucial role of nuclear motion in calculating just how the hydrogen molecule explodes.

Power surge

Koomey

Google’s server farms sit next to the Columbia River, near some of the country’s biggest hydroelectric dams, and that’s no accident. Servers are hot little boxes that need fans to cool them; server farms need electric lights. The power gobbled up by network server facilities at least doubled from 2000 to 2005, according to “Estimating Total Power Consumption by Servers in the U.S. and the World,” a report issued Feb. 15 and commissioned by major server suppliers. Even this startling power surge may be an underestimate, says Jonathan Koomey of the Environmental Energy Technologies Division, the report’s author, because Google builds its own servers, numbering in the hundreds of thousands, from PC motherboards, which aren’t defined as servers by leading market analysts.

Light at the end of the planet

South Pole Telescope

At the South Pole a new telescope, 23 meters tall, 10 meters in diameter, and weighing 254 metric tons, saw its “first light” on Feb. 16 with a look at the planet Jupiter. The millimeter-wavelength South Pole Telescope’s real target is invisible, however: namely dark energy, as revealed by subtler variations in the temperature and polarization of the cosmic microwave background than any yet observed. By studying variations in the CMB as revealed in the growth of massive clusters of galaxies, the telescope team, which includes the Physics Division’s Adrian Lee and Helmuth Spieler as well as current and former postdocs and grad students Nils Halverson, Trevor Lanting, Martin Lueker, and Thomas Plagge, hopes to set tight constraints on cosmological parameters and to investigate the nature of dark energy.

The growth of galaxies

Newman

No, the Extended Groth Strip isn’t that spare tire around your middle, and AEGIS isn’t the goatskin the goddess Athena wore as a symbol of her authority. The Groth Strip is a small region of the sky that takes its name from Princeton cosmologist Edward Groth; AEGIS is the All-wavelength Extended Groth-strip International Survey, soon to be published in Astrophysical Journal Letters. In a five-year study performed by an international group of 50 researchers, including Jeffrey Newman of the Physics Division, AEGIS used four telescopes in space and four on the ground to record the Groth Strip in every possible wavelength from radio on up through x-rays. Each wavelength, says Newman, “provides a little piece of the puzzle of how galaxies evolve.”

Hungry fungus

Pichia stipitis

These days ethanol usually means corn or sugar cane, but future biofuels will need more economical feedstocks, like wood chips and agricultural trash. Nature’s most proficient fermenter of xylose, or “wood sugar,” is a fungus called Pichia stipitis, originally isolated from the guts of wood-eating insects. As reported in Nature Biotechnology on March 4, researchers at the Department of Energy Joint Genome Institute and their colleagues have now sequenced the genome of the hungry fungus and pinpointed some of the key genes that make it effective. JGI’s director, Eddy Rubin, says the knowledge will be used to further improve P. stipitis’s ability to ferment xylose and possibly to confer the same ability on other organisms like brewer’s yeast.

Manganese demystified

Water-splitting catalytic cycle

Until 3.2 billion years ago, there was no free oxygen in Earth’s oceans or atmosphere. Along came primitive bacteria that developed a way to split water molecules into protons, electrons, and oxygen — the key mechanism of photosynthesis, which eventually made life possible for plants and other complex organisms like ourselves. The heart of the water-splitting system was a complex of manganese and calcium ions whose arrangement has remained a mystery until now. Junko Yano, Vittal Yachandra, Yulia Pushkar, Azul Lewis, and Kenneth Sauer of the Physical Biosciences Division combined x-ray absorption spectroscopy and protein crystallography to reveal the manganese complex’s precise structure in the March 9 issue of the Journal of Biological Chemistry, that journal’s “paper of the week.”

Where Has Your PDG Booklet Been?

With the proliferation of blogging, which brings people with a common interest together in an online community, some unusual grassroots efforts have sprung forth. And scientists are among those making a mark.

Case in point: a blog by Fermilab’s Gordon Watts called “Life as a Physicist.” Included on his website is a feature called “Where Has Your PDG Book Been!?”

The PDG (Particle Data Group) booklet, deemed by many to be the bible of physics, has been produced by Berkeley Lab’s Physics Division for the past 50 years. Says Berkeley Lab’s Michael Barnett, “It is the most cited publication in particle physics, and perhaps in all of physics.” The booklet is distributed every two years to nearly 31,000 people worldwide.

To honor this legendary, pocket-sized reference manual, Watts encouraged readers of his blog to submit photographs taken of the PDG booklet in exotic locales. The scientific community rose to the challenge with images of the book in such places as Paris, Easter Island, the Mediterranean coast, and Washington, D.C. (pictured above and right).

To view additional images, go to www.flickr.com/groups/pdgpictures/pool/. Watts’s blog can be read at gordonwatts.wordpress.com.

City of Berkeley’s Planning Commission Discusses LRDP

The City of Berkeley’s Planning Commission discussed Berkeley Lab’s draft 2006 Long Range Development Plan (LRDP) and its accompanying draft Environmental Impact Report (EIR) at a March 14 meeting.

The meeting, held at the North Berkeley Senior Center, was open to the public and also included members of Berkeley’s Transportation Commission, Landmarks Preservation Commission, and Health Commission. Only the Planning Commission had a quorum of members, meaning only it could develop recommendations to pass on to the City of Berkeley. On this note, the commission drafted a resolution that raised concerns over several aspects of the LRDP, such as transportation management and emergency preparedness, which it will send to the Berkeley City Council. In addition to the commissioners, about 20 people attended the meeting, and eight presented comments.

Berkeley Lab’s draft LRDP establishes a framework of land-use principles to guide Berkeley Lab growth and other physical development through 2025. The draft EIR provides an assessment of the LRDP and its potential effects on the environment. The EIR is undergoing a public review process that ends on March 23.

At the March 14 meeting, Dan Marks, Director of the City’s Planning and Development Department, lauded the fact that Lab staff met with city staff before the LRDP and accompanying EIR were issued on Jan. 22, and reduced the Lab’s growth plans, as well as established a Transportation Demand Management Plan.

However, Marks added that there is room for improvement. Among other issues, he raised concerns over the level of proposed development and population growth at the Lab’s Berkeley hills site, the aesthetic impact of the proposed buildings, air quality impacts stemming from diesel emissions, impacts to the Strawberry Creek drainage, and the difficulties in responding to a large earthquake. He also expressed frustration over the cumulative impact of Berkeley Lab’s and UC Berkeley’s proposed growth.

All University of California campuses, and Berkeley Lab, are required to maintain and periodically update their LRDPs. A public hearing for the EIR was held on Feb. 26. Approximately 30 people attended the public hearing, and 14 provided comments. The Lab will respond in writing to all of the comments received during the comment period in the final EIR. The final LRDP and EIR are expected to be submitted to the UC Regents for approval in the summer or early fall.

If approved, the documents will replace Berkeley Lab’s existing LRDP and EIR, which were initially approved in 1987 (the EIR was updated in 1992 and 1997). Both documents can be accessed at Berkeley Lab’s LRDP website: http://www.lbl.gov/LRDP/.

People, Awards, and Honors

Materials Scientist Receives Cope Award

Jean M. J. Fréchet, with the Lab’s Molecular Foundry, is the winner of the Arthur C. Cope Award, presented by the American Chemical Society. The Cope Award — which recognizes and encourages excellence in organic chemistry — consists of a medal, a cash prize of $25,000, and an unrestricted research grant of $150,000 to be assigned by the recipient to any university or research institution. Fréchet is “the dean of synthetic polymer chemistry in the U.S.,” according to Harvard University chemistry professor George Whitesides. “His influence on the field has been immense, and I give him substantial credit for the fact that polymer science is currently an intellectually robust field,” he says.

Foundry Director Awarded “Scientist of the Year”

Carolyn Bertozzi, director of Berkeley Lab’s Molecular Foundry, was named “Scientist of the Year” by the National Organization of Gay and Lesbian Scientists and Technical Professionals. She was honored at a reception during the American Association for the Advancement of Science Annual Meeting in San Francisco last month. Bertozzi was chosen because of her “outstanding achievements in applying chemistry to help answer biological questions related to human health and disease. Her laboratory group studies cell surface interactions in the areas of cancer, inflammation and bacterial infection.”

Governor, Trade Group Laud Energy Expert

Ed Vine, with the Lab’s Environmental Energy Technologies Division, was among a 17-member team honored with an “Outstanding Achievement in Marketing Research and Evaluation” award by the Association of Energy Services Professionals. The team was recognized for developing the California Energy Program Evaluation Framework and the California Energy Efficiency Program Evaluation Protocols. The team also received a letter from Gov. Schwarzenegger that stated in part, “Your commitment to a sustainable environment further enhances the state’s image as a world leader, but more importantly, will bring about a healthier future for our children and grandchildren. Thank you for your contributions to energy efficiency and greenhouse gas reduction.”

ATLAS Physicist Arguin Wins Dissertation Award

The American Physical Society has selected Berkeley Lab physicist Jean-Francois Arguin as winner of the 2007 Mitsuyoshi Tanaka Dissertation Award in Experimental Particle Physics. The award is presented to “exceptional young scientists who have performed original doctoral thesis work of outstanding scientific quality and achievement in the area of experimental particle physics.” Arguin is currently at CERN, helping to commission the ATLAS detector for its first run later this year. Arguin’s paper was entitled “Measurement of the Top Quark Mass with In Situ Jet Energy Scale Calibration Using Hadronic W Boson Decays at CDF-II.”

Energy Alliance Names Meier as ‘Unsung Hero’

The Alliance to Save Energy (ASE) in Washington has presented its annual “Unsung Hero” of energy efficiency award to Alan Meier, of the Environmental Energy Technologies Division. In her congratulatory remarks, ASE President Kateri Callahan cited Meier’s “unerring ability to identify important energy-saving opportunities that are so far ahead of their time that they are typically unfundable for the first few years, while Alan manages to get the rest of us to pay attention.”

Veteran Staffer Fills Research Integrity Post

A newly created position, Research and Institutional Integrity Manager, has been filled by Meredith Montgomery, a 24-year Lab administrator and manager. Chief Operations Officer David McGraw established the post to monitor the Lab’s compliance in its policies and actions with University of California Standards of Ethical Conduct. Montgomery serves as the Lab’s Research Integrity, Conflict of Interest, and Investigations Manager, working with the Directorate and Laboratory Counsel. The Lab’s whistleblower program is also under her purview. Most recently, Montgomery served as Group Leader for Investigations in Internal Audit.

Symposium to Honor Chemical Scientist

The campus will host a symposium honoring the 70th birthday of William Lester, a UC Berkeley chemistry professor and researcher with Berkeley Lab’s Chemical Sciences Division, March 27-31. Lester is best known for extending the powerful quantum Monte Carlo method to the range of chemical problems that form the traditional domain of quantum chemistry.