Scientists Unravel a Photosynthesis Mystery

Another important piece of the photosynthesis puzzle is now in place. Berkeley Lab and UC Berkeley researchers have identified one of the key molecules that help protect plants from the oxidation damage caused by absorbing too much light.

The researchers determined that when chlorophyll molecules in green plants take in more solar energy than they are able to immediately use, molecules of zeaxanthin, a member of the carotenoid family of pigment molecules, carry away the excess energy.

Graham Fleming (left), Nancy Holt and Kris Niyogi of the Physical Biosciences Division have identified a key molecule in the photo-protection mechanism of green plants.

This study was led by Graham Fleming, director of Berkeley Lab’s Physical Biosciences Division and a chemistry professor at UC Berkeley, and Kris Niyogi, who also holds joint appointments with the two institutions. The results are reported in the Jan. 21, 2005 issue of Science. Co-authoring the paper with Fleming and Niyogi were Nancy Holt, Donatas Zigmantas, Leonas Valkunas and Xiao-Ping Li.

Through photosynthesis, green plants are able to harvest energy from sunlight and convert it to chemical energy at an energy transfer efficiency of approximately 97 percent. If scientists can create artificial versions of photosynthesis, the dream of solar power as a clean, efficient and sustainable source of energy for humanity could be realized.

A potential pitfall for any sunlight-harvesting system is that if the system becomes overloaded with absorbed energy, it will likely suffer some form of damage. Plants solve this problem on a daily basis with a photo-protective mechanism called “feedback de-excitation quenching.” Excess energy, detected through changes in pH (the feedback mechanism), is safely passed from one molecular system to another, where it can then be routed down relatively harmless chemical reaction pathways.

Said Fleming, “This defense mechanism is so sensitive to changing light conditions, it will even respond to the passing of clouds overhead. It is one of nature’s supreme examples of nanoscale engineering.”

The light-harvesting system of plants consists of two protein complexes: Photosystem I and Photosystem II. Each complex features antennae made up of chlorophyll and carotenoid molecules that gain extra “excitation” energy when they capture photons. This excitation energy is funneled through a series of molecules into a reaction center where it is converted to chemical energy. Scientists have long suspected that the photo-protective mechanism involved carotenoids in Photosystem II, but, until now, the details were unknown.

Said Holt, “While it takes from 10 to 15 minutes for a plant’s feedback de-excitation quenching mechanism to maximize, the individual steps in the quenching process occur on picosecond and even femtosecond timescales. To identify these steps, we needed the ultrafast spectroscopic capabilities that have only recently become available.”

The Berkeley researchers used femtosecond spectroscopic techniques to follow the movement of absorbed excitation energy in the thylakoid membranes of spinach leaves, which are large and proficient at quenching excess solar energy. They found that intense exposure to light triggers the formation of zeaxanthin molecules, which are able to interact with the excited chlorophyll molecules. During this interaction, energy is dissipated via a charge exchange mechanism in which the zeaxanthin gives up an electron to the chlorophyll. The charge exchange brings the chlorophyll’s energy back down to its ground state and turns the zeaxanthin into a radical cation which, unlike an excited chlorophyll molecule, is a non-oxidizing agent.

Green plants use photosynthesis to convert sunlight to chemical energy, but too much sunlight can result in oxidation damage.

To confirm that zeaxanthin, and not some other intermediate, was the key player in the energy quenching, the Berkeley researchers conducted similar tests on mutant strains of Arabidopsis thaliana, a weed that serves as a model organism for plant studies. These strains were genetically engineered either to overexpress the gene psbS or not to express it at all. The gene codes for an eponymous protein that is essential for the quenching process (most likely by binding zeaxanthin to chlorophyll).

“Our work with the mutant strains of Arabidopsis thaliana clearly showed that formation of zeaxanthin and its charge exchange with chlorophyll were responsible for the energy quenching we measured,” said Niyogi. “We were surprised to find that the mechanism behind this energy quenching was a charge exchange, as earlier studies had indicated the mechanism was an energy transfer.”

Fleming credits calculations performed on the supercomputers at the National Energy Research Scientific Computing Center (NERSC), under the leadership of Martin Head-Gordon of the Chemical Sciences Division, as an important factor in his group’s determination that the mechanism behind energy quenching was an electron charge exchange.

“The success of this project depended on several different areas of science, from the greenhouse to the supercomputer,” Fleming said. “It demonstrates that to understand extremely complex chemical systems, like photosynthesis, it is essential to combine state-of-the-art expertise in multiple scientific disciplines.”

Many pieces of the photosynthesis puzzle must still be put in place before scientists will have a clear picture of the process. Fleming likens the ongoing research effort to the popular board game Clue.

“You have to figure out something like ‘It was Colonel Mustard in the library with the lead pipe,’” he says. “When we began this project, we didn’t know who did it, how they did it, or where they did it. Now we know who did it and how, but we don’t know where. That’s next!”

It’s Official: UC Will Compete for Lab Contract

As expected, the University of California Board of Regents yesterday officially authorized UC to enter the competition for Berkeley Lab’s next management contract. Proposals are due to the Department of Energy by February 9.

“Lawrence Berkeley National Laboratory and its employees are a critical part of the University of California system and provide a tremendously valuable scientific contribution to our nation,” UC President Robert Dynes said. “Our strong bid will continue our proud tradition of public service and scientific discovery while ensuring that the best management practices are in place at the Lab.

“When one looks over the horizon to the future of science over the next 20 years, the work is going to be extremely exciting, and a UC-managed Berkeley Laboratory under Director (Steve) Chu’s leadership is going to be an extremely exciting place to be.”

Chu expressed his pleasure with the Regents’ decision. “I am gratified that the Board of Regents has decided to authorize competition for the University of California to maintain its long-standing management of Berkeley Lab. Over the years, the excellence of the Lab is due in large part to the fact that we are adjacent to, and close collaborators with, the finest public university in the world.

“The benefit goes both ways. The Lab has been an integral part of the teaching and research mission of the University, and tens of thousands of undergraduate, graduate students and postdoctoral fellows were taught and trained by LBNL employees over the last 50 years. Berkeley Lab has also contributed significantly to the distinction of the Berkeley campus. Ten out of the 14 UC Berkeley faculty who have been recognized by a Nobel Prize in science were or are employees of LBNL. In addition, one-third of the current Berkeley campus faculty in the National Academy of Sciences and one-fifth of the faculty in the National Academy of Engineering are employees of the Berkeley Lab. It is fair to say that both institutions have thrived during our 74-year partnership.”

Under the University’s Berkeley Lab proposal, UC will be the prime contractor and will propose that Lab staff remain as UC employees and as part of the UC system-wide pension and benefits program. Dynes informed the Regents that the University’s proposal for the use of DOE fees that come with the management contract would maximize the benefit to scientific programs and continue the University’s policy of allocating funds for administrative and operating needs while returning the excess fee funds to the Laboratory for additional research by Lab scientists. The proposal will highlight the strengths of the University and its ability to produce future scientific and technological achievements at the Laboratory.

“Today’s action by the Regents will allow Berkeley Lab to continue to be a place of tremendous scientific and technological discovery under UC management,” said Chairman of the UC Board of Regents Gerald L. Parsky. “The Regents fully expect that a strong, winning bid will be submitted to the Department of Energy, building upon UC’s 60-year history of managing this scientific jewel.”

Gadgil, the Cartoon

To the many awards and honors that Ashok Gadgil has received since he developed UV Waterworks — a water purification system that kills bacteria with ultraviolet light — he can now add another: immortalization as a cartoon character.

“It gives me more joy to see myself as a cartoon character than to publish another paper in a refereed journal,” says Gadgil.

It all started last year, when Gadgil, a scientist with the Environmental Energy Technologies Division, received an unexpected phone call. An artist asked if she could use his name and cartoon likeness in a children’s book that teaches kids about environmental engineering.

-- Dan Krotz

Safe Water for Tsunami Victims

WaterHealth International (WHI), the company that manufactures UV Waterworks, has announced that it will ship the devices to tsunami-devastated areas in India and Sri Lanka that lack safe drinking water. To fund this effort, WHI and several partners, including the International Finance Corporation, philanthropic organizations and Global Giving, have arranged to leverage each $1 tax-deductible contribution into $4 of direct relief in Sri Lanka and India.

UV Waterworks can purify drinking water for a refugee camp or a village of 2,000 for about one cent per person per week. The units are easy to transport. They can be moved from refugee camps to permanent sites for water systems as community reconstruction progresses. Donations can be made at http://globalgiving.com/871 for Sri Lanka and http://globalgiving.com/872 for India.
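The cost and leverage figures above can be multiplied out directly. The Python sketch below is only back-of-the-envelope arithmetic on the article's rounded numbers (about one cent per person per week, a village of 2,000, and the $1-to-$4 leverage). The 52-week year and the $25 example gift are illustrative assumptions, not figures from WaterHealth International.

```python
# Back-of-the-envelope arithmetic on the article's rounded figures.
# Amounts are kept in integer cents to avoid floating-point rounding.

COST_PER_PERSON_PER_WEEK_CENTS = 1  # "about one cent per person per week"
LEVERAGE_FACTOR = 4                 # each $1 donated -> $4 of direct relief
WEEKS_PER_YEAR = 52                 # illustrative assumption


def weekly_cost_cents(population: int) -> int:
    """Approximate weekly purification cost, in cents, for a community."""
    return population * COST_PER_PERSON_PER_WEEK_CENTS


def relief_cents(donation_cents: int) -> int:
    """Direct relief, in cents, delivered for a donation under 1:4 leverage."""
    return donation_cents * LEVERAGE_FACTOR


if __name__ == "__main__":
    village = 2_000
    weekly = weekly_cost_cents(village)
    print(f"Weekly cost for {village:,} people: ${weekly / 100:,.2f}")
    print(f"Yearly cost: ${WEEKS_PER_YEAR * weekly / 100:,.2f}")
    print(f"Relief from a $25 gift: ${relief_cents(2_500) / 100:,.2f}")
```

For a village of 2,000, this works out to about $20 a week, or roughly $1,040 a year. Under the stated leverage, a $25 gift becomes $100 of relief.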

Congressman Learns How to Probe the Nanoworld

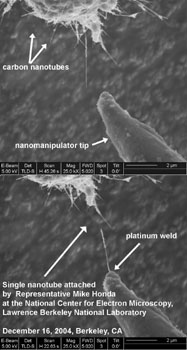

Nothing compares to giving an influential legislator a hands-on experience of research. When he visited Berkeley Lab in December, U.S. Representative Mike Honda from San Jose experienced both extremes in the Lab’s nanotechnology program: the building site of the grand six-story Molecular Foundry, and the microworld of nanomanipulation. After visiting with Lab Director Steve Chu and Foundry Director Paul Alivisatos, Honda went inside the National Center for Electron Microscopy and probably did something that none of his legislative colleagues had ever done — he attached a single nanotube to a tungsten microprobe.

The photos on the bottom right, courtesy of NCEM staff scientist Andy Minor, encompass an area roughly the width of two red blood cells, or about one-tenth the width of a human hair. The left picture shows a bundle of multi-wall carbon nanotubes of various diameters, probably ranging from 2 to 100 nanometers (nm). An FEI Strata 235 Dual-Beam Focused Ion Beam (FIB) at NCEM was recently augmented with multiple nanomanipulators. The figures show a carbon nanotube bundle being attached to a tungsten microprobe inside the FIB and then, with Honda at the controls, the nanomanipulator being extended to make contact with and extract a single nanotube. According to Minor, nanomanipulation is a crucial aspect of building prototype nanoscale systems such as molecular electronic devices.

Conversation with Director Draws Crowd, Questions

Nearly 100 employees crammed into the Perseverance Hall conference room on January 10 to listen to and speak with Berkeley Lab Director Steve Chu, and they left liking what their new leader had to say. Speaking informally about issues ranging from America’s energy future to employee retirement plans, Chu held the first in a series of brown-bag meetings with Lab employees, which reflect what he described as “a different way of doing business.”

“What I’m trying to do is establish a system, not of edicts or directives handed down, but of discussing things and taking advantage of a lot of bright people here who can reach consensus on what we should try to do.”

And discuss things they did, for almost an hour. Among the subjects brought up by his standing-room-only audience, and Chu’s responses, were:

- The Lab’s commitment to addressing global climate change: “The most pressing problem that has to be solved by science is energy, as we go forward toward a CO2-neutral energy diet as soon as possible.” Chu, citing a probable 50-year production window for fossil fuels, said one of the main challenges is to find ways to convert solar-based energy into storable, transportable chemical energy. Climate change, he said, is the “wild card.” “The Lab is well-positioned to use its brain power to figure it out. It’s one of the major reasons I took this job.”

- The independence of scientific divisions within his new organization: “They have to have independence, but I would like to increase communications between divisions that lead to collaborations.”

- Homeland Security research: “Yes (the Lab can seek such work), as long as it’s not classified. We shouldn’t go after the money just because it’s there. We have a proud heritage here. It’s the best Office of Science general-purpose lab there is, we have a lot to offer, so we’re good enough that we don’t need to run after every last dollar.”

- The future of the Bevatron: “We are hopeful of getting funds from the Department of Energy in the FY06 budget that will allow us to begin cleaning it up. We have no favored project for that site, but we just want to get rid of [the building]. It looks like the DOE is going to help us do that.”

- His impending review of Operations budgets: “A budget committee will look at costs in all areas. And I want to include both Operations and science. We want to find efficiencies all over that will allow us to do things (that cost money). But we’re not going to undergo massive layoffs in order to build a couple of new buildings.”

Director Steve Chu (front right) led a full-house brown bag discussion with employees on January 10.

After a discussion of Laboratory Directed Research and Development (LDRD) funding, Chu also committed to carrying the message to DOE and Congress about the value of this investment, both strategically and as seed money for exploratory new research.

The issue that created the most dialogue, however, was that of pensions. Employees were reassured that Chu would invest strong personal effort into resolving questions about employee benefits that have arisen as the University of California prepares for management contract competition being held by the Department of Energy.

“Nothing’s cast in concrete,” he told those expressing concerns that their health and retirement benefits might be reduced under a new contract. “I am engaged (in the discussions), and I’m doing my best to inform them (the DOE and UC) of the possible consequences” of various options being discussed.

Chu said he was encouraged by the early responses he has received from DOE officials on this issue. “So far, they have been quite reasonable,” he said. “They do not want their labs to suffer, and they are quite concerned about this. It’s a complicated problem, and we need to get the positions clarified. They (the DOE) are not saying they will cut benefits. They are just concerned about future costs and their own liability, and they want wiggle room for input in the future. That is not an unreasonable stand.”

The employee concerns were triggered by a clause in the Request for Proposals for management of the Berkeley Lab contract, which includes a provision that benefits shall be maintained “at a reasonable cost” by the contractor, and that “cost reimbursement of benefit plans will be based on contracting officer approval of contractor actions pursuant to an approved ‘employee benefit value study’ and an ‘employee benefit cost survey comparison.’” The goal of the RFP is “to allow the DOE to move to a net benefit value and per capita cost not to exceed a yet-to-be-defined comparator group by more than five percent,” Chu said.

Since the UC retirement system has been so successful in recent years through its investment portfolio, some employees have interpreted the RFP clause as putting a ceiling on benefit payments that could lead to lowered employer contributions and payouts.

“They don’t say they’re going to modify the pension plan,” Chu reminded the audience. “My read of the proposal is that they want to keep things the same in the transition, but they reserve the right to modify it in the future. Part of my job is to help the DOE appreciate the risks of reducing the benefits plan for employees and retirees of the National Labs. The good news is that I believe they are beginning to fully appreciate the unintended consequences of the RFP.”

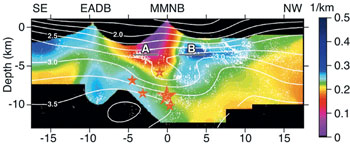

An Inside Look at the San Andreas Fault

Berkeley Lab scientists have developed a better way to eavesdrop on one of California’s crankiest and least understood inhabitants. They’ve constructed CT scan-like images of the San Andreas Fault that reveal nucleation zones of past quakes and areas where stress continues to build, at a resolution 10 times greater than that of other images.

“Our high-resolution images could allow us to watch stress evolve deep within faults like never before,” says Valeri Korneev of the Earth Sciences Division, who developed the imaging method with fellow ESD scientists Robert Nadeau and the renowned seismologist Thomas McEvilly, who died in 2002.

In this tomographic reconstruction, small red stars indicate the hypocenters of earthquakes greater than magnitude 4, and the large star indicates the hypocenter of the 1966 magnitude 6 quake.

Their new technique is part of the bonanza of earthquake research emerging from the small town of Parkfield, Calif., also known as the seismology capital of the world. Here, where the San Andreas Fault cuts through central California, a magnitude 6 earthquake has occurred on average every 22 years for about the last 100 years. (The most recent one came about 16 years later than that average would predict, rattling the town on Sept. 28, 2004, after a 38-year lull.) In addition, hundreds of tiny earthquakes shake the fault every year. To learn more about these quakes, the U.S. Geological Survey has placed a network of instruments near the town to record every seismic event.

After sifting through data on thousands of Parkfield micro-earthquakes dating back to 1987, Korneev and his colleagues became interested in a type of low-velocity wave detected at the tail end of S and P waves (the strong, far-reaching waves that shake buildings and enable seismologists to determine the strength and epicenter of quakes). They determined that these slower waves are fault-zone guided waves, meaning they are trapped within the fault’s weak and fractured rock as they travel, much as sound waves are trapped within the brass piping of a trumpet.

Korneev had a hunch that because these waves are locked inside the fault during their journey from a quake’s center, they could offer an unparalleled window into the fault’s structure. Until now, seismologists used S and P waves to paint a picture of a fault’s makeup. But these waves often travel miles from the fault through bedrock and sediment before they’re detected, which clouds any information they might contain about the fault’s structure. Perhaps guided waves offered a better way.

“We are interested in what happens in the narrow zone of the fault where earthquakes originate,” says Korneev. “And guided waves are trapped inside the fault, precisely where we are trying to find structural information.”

To test their theory, the team obtained guided wave data collected at two Parkfield borehole monitoring stations, and turned to Berkeley Lab’s Center for Computational Seismology, which serves as the core data processing, computation and visualization facility for seismology-related research at Berkeley Lab. Using computer algorithms similar to those that transform X-rays into CT scans of the brain or heart, they developed a two-dimensional image of the San Andreas Fault that portrays a 35-kilometer stretch of the fault, plunging from the Earth’s surface to 10 kilometers underground, at a resolution of about 500 meters.
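The comparison to CT scanning can be made concrete. In straight-ray travel-time tomography, each measured travel time is modeled as a sum over the cells a wave crosses: the path length in each cell multiplied by that cell's slowness (the inverse of velocity). The slowness map is then solved for. The Python sketch below is a toy illustration under stated assumptions. It applies the Kaczmarz method, also known as the algebraic reconstruction technique (ART) from early CT, to a hypothetical 2 x 2 grid with six rays and made-up slowness values. It is not the Berkeley Lab team's algorithm, which the article does not describe. For scale, a 35-kilometer by 10-kilometer section divided into 500-meter cells would contain 70 x 20 = 1,400 cells.

```python
# Toy straight-ray travel-time tomography solved with the Kaczmarz method (ART).
# Hypothetical setup: a 2 x 2 grid of cells, each with an unknown slowness,
# and six rays whose total travel times are "measured". Each ray crosses two
# cells with unit path length in each, a simplification of real ray geometry.


def kaczmarz(rows: list[list[float]], times: list[float],
             n_cells: int, sweeps: int = 200) -> list[float]:
    """Solve rows @ s = times for s by cycling through row projections."""
    s = [0.0] * n_cells
    for _ in range(sweeps):
        for a, t in zip(rows, times):
            residual = t - sum(ai * si for ai, si in zip(a, s))
            norm_sq = sum(ai * ai for ai in a)
            # Project the current estimate onto the hyperplane a . s = t.
            s = [si + residual * ai / norm_sq for ai, si in zip(a, s)]
    return s


# Cell order: [top-left, top-right, bottom-left, bottom-right]
RAYS = [
    [1, 1, 0, 0],  # across the top row
    [0, 0, 1, 1],  # across the bottom row
    [1, 0, 1, 0],  # down the left column
    [0, 1, 0, 1],  # down the right column
    [1, 0, 0, 1],  # diagonal
    [0, 1, 1, 0],  # anti-diagonal
]

if __name__ == "__main__":
    # Made-up values; the 0.40 cell plays the role of slow, fractured rock.
    true_slowness = [0.20, 0.25, 0.40, 0.22]
    observed = [sum(a * s for a, s in zip(ray, true_slowness)) for ray in RAYS]
    estimate = kaczmarz(RAYS, observed, n_cells=4)
    print([round(x, 4) for x in estimate])  # prints [0.2, 0.25, 0.4, 0.22]
```

Here every cell is crossed by three rays and the six equations determine all four unknowns, so the iteration recovers the made-up slowness values. Real surveys have far more cells and less ideal ray coverage, and they often rely on regularization.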

The image has helped them identify a zone that could mark where the creeping part of the fault abuts the locked part, an area that may correlate with the hypocenters of four earthquakes greater than magnitude 4 that shook Parkfield in the past century.

“With images like these, the next logical step is to use guided waves to actively image a fault and track stress as it accumulates,” says Korneev.

Power Interruptions Cost U.S. $79 Billion Annually

A study conducted by Lab researchers Kristina Hamachi-LaCommare and Joe Eto estimates that electric power outages and blackouts — which can last from seconds to days — cost the U.S. about $80 billion annually. The study was conducted for the U.S. Department of Energy’s Office of Electric Transmission and Distribution.

“The big blackout that hit the northeast in the summer of 2003 really focused attention on the state of the electric power grid,” says Hamachi-LaCommare. “After the blackout, there were calls for investments to modernize the grid, which ranged from $50 to 100 billion. We wanted to add a key missing piece of information to these discussions, namely, the value these investments might bring in the form of improved reliability or fewer or shorter power interruptions.”

The study aggregates data from three sources: surveys on the value electricity customers place on uninterrupted service, information recorded by electric utilities on power interruptions, and information from the U.S. Energy Information Administration on the number, location and type of U.S. electricity customers.

Of the $80 billion estimate, commercial-sector losses account for $57 billion (73 percent) and industrial-sector losses for $20 billion (25 percent). The authors estimate residential losses at only $1.5 billion, or about 2 percent of the total. Yet, Eto notes, “It is difficult to put a dollar value on the inconvenience or hassle associated with power interruptions affecting residential electricity customers. In addition, our method did not take into account the effects on residential customers of extended outages lasting many hours or days, since these are very rare occurrences … A problem with the data is that some utilities, by convention, do not include outages caused by major natural events, such as hurricanes or ice storms, in their statistics.”

A surprising conclusion of the study is that momentary interruptions, which are more frequent, have a bigger impact on the total cost of interruptions than sustained interruptions do. Momentary interruptions were responsible for two-thirds of the cost, or $52 billion. “This finding underscores the fact that, for many commercial and industrial customers, it is the length of the downtime resulting from a loss of power that determines the cost of an interruption, not necessarily the length of the interruption itself,” Eto says.
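The dollar figures and percentages in the two paragraphs above can be cross-checked. Because the sector estimates are rounded, they add up to $78.5 billion rather than exactly $79 billion or $80 billion. The minimal Python sketch below uses only the article's figures. It shows that the quoted percentages match each sector's share of that sum and that $52 billion is roughly two-thirds of it.

```python
# Sector losses as reported in the article, in billions of dollars per year.
SECTOR_LOSSES = {"commercial": 57.0, "industrial": 20.0, "residential": 1.5}
MOMENTARY_LOSSES = 52.0  # portion attributed to momentary interruptions


def shares(losses: dict[str, float]) -> dict[str, float]:
    """Return each sector's loss as a percentage of the sum over all sectors."""
    total = sum(losses.values())
    return {name: 100 * value / total for name, value in losses.items()}


if __name__ == "__main__":
    total = sum(SECTOR_LOSSES.values())
    print(f"Sum of sector estimates: ${total} billion")
    for name, pct in shares(SECTOR_LOSSES).items():
        print(f"{name:<12} {pct:5.1f}%")
    print(f"Momentary share of total: {MOMENTARY_LOSSES / total:.2f}")
```

The sketch reports shares of about 72.6, 25.5 and 1.9 percent, which round to the 73, 25 and 2 percent given in the text. The momentary share comes out at about 0.66.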

The researchers caution that there are uncertainties in the data available, which means that the true costs could be higher or lower by tens of billions of dollars. To improve the accuracy of the data, Hamachi-LaCommare and Eto recommend that the utility industry and its regulators improve the collection of data on power interruptions and power quality, and that policy-makers, regulators, and industry coordinate their collection of data.

“Given the high stakes involved in decisions regarding who should invest how much to improve the grid, it is imperative that we rely on the best possible information on one of the key expected benefits from these investments, namely improvements in electricity reliability,” concludes Eto.

NCEM Microscope Helps Identify the Makeup of Star Dust

It’s been the subject of music, movies and poetry, but it has also been the source of a mystery that has vexed astronomers for more than 40 years. The “it” is star dust, interstellar grains that measure about one-hundredth the diameter of a human hair. And the mystery is a “bump” in the ultraviolet spectra at about 2175 angstroms (Å), which corresponds to a photon energy of 5.7 electron volts (eV). This spectral bump is the strongest feature observed in the visible-ultraviolet wavelength range along most galactic lines of sight.
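The wavelength and energy of the bump are related by the photon-energy formula E = hc/λ, where hc ≈ 12,398 eV·Å. The minimal Python sketch below performs that conversion. The constant is rounded from the standard values of Planck's constant and the speed of light.

```python
# Convert a photon wavelength to energy with E = h * c / wavelength.
# h * c is approximately 12398.42 eV * angstrom (rounded standard value).
HC_EV_ANGSTROM = 12398.42


def wavelength_to_ev(wavelength_angstrom: float) -> float:
    """Photon energy in electron volts for a wavelength given in angstroms."""
    return HC_EV_ANGSTROM / wavelength_angstrom


if __name__ == "__main__":
    print(f"{wavelength_to_ev(2175):.2f} eV")  # prints 5.70 eV
```

Running it gives 5.70 eV, matching the 5.7 eV figure quoted for the 2175 Å feature.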

Scientists have speculated that the carrier of this spectral signal could be fullerenes, nano-diamonds, or even interstellar organisms. Now, thanks to the unique capabilities of Berkeley Lab’s National Center for Electron Microscopy (NCEM), researchers have identified the likely carriers.

Interstellar space is filled with glowing dust: the debris left over from stars that exploded.

Using NCEM’s newest transmission electron microscope (TEM), which is equipped with a monochromator and high-resolution electron energy-loss spectrometer, a multi-institutional collaboration has determined that the spectral signature of star dust comes from the combination of organic carbon and amorphous silicates (glass with embedded metals and sulfides), two of the most common materials in interstellar space.

“Our monochromated TEM (the F20 UT Tecnai) is the only instrument in the U.S. that could be used to image the 5.7 eV energy feature of individual star dust particles and identify the source,” says Nigel Browning, an NCEM staff scientist who represented Berkeley Lab in the collaboration. “People have been looking up into space and seeing this 5.7 eV feature in star dust for the past 40 years and arguing about the nature of the signal carrier. Our results should help resolve the debate.”

This research was led by John Bradley of the Institute of Geophysics and Planetary Physics at Lawrence Livermore National Laboratory. Other collaborators were LLNL’s Zu Rong Dai, Giles Graham, Peter Weber, Julie Smith, Ian Hutcheon, Hope Ishii and Sasa Bajt, plus Rolf Erni of UC Davis, Christine Floss and Frank Stadermann of Washington University in St. Louis, and Scott Sandford of the NASA-Ames Research Center. Their results were published in the Jan. 14, 2005 issue of Science.

The universe is a dusty place, its galaxies filled with the detritus of all the stars that have ever exploded. According to the U.S. Geological Survey, more than 1,000 tons of star dust fall to Earth each year, embedded within interplanetary dust particles (IDPs) that date from our solar system’s primitive stage.

Nigel Browning is pictured at NCEM’s Transmission Electron Microscope, which was used to identify the source of a mysterious 2175 Å spectral bump in star dust.

IDPs, collected in the stratosphere using NASA ER-2 aircraft, have been the subject of intense studies led by LLNL’s Bradley over the past several years. To help resolve the question of the 5.7 eV spectral feature, he enlisted the aid of Browning and NCEM’s F20 UT Tecnai microscope.

“The 200 kilovolt monochromated F20 UT Tecnai is an instrument designed to produce the optimum high resolution performance in both transmission electron microscopy and scanning transmission electron microscopy,” says Browning. “In addition, the incorporation of a monochromator into the gun permits electron energy loss spectroscopy to be performed at a very high resolution.”

The NCEM microscope’s unique capabilities enabled the collaboration to use a technique called valence electron energy-loss spectroscopy (VEELS), which is ideally suited for studying the 5.7 eV feature of star dust. Their VEELS spectra strongly implicated carbonyl-containing molecules and hydroxylated amorphous silicates as the signal carriers.

Said project leader Bradley, “While we cannot say that organic carbon and amorphous silicates are the only carriers of the astronomical feature, these molecules are abundant in interstellar space, and cosmically abundant carriers are needed to explain the ubiquity of the 2175 Å feature.”

In their Science paper, the collaboration team speculates that the carbon and silicate grains were produced by irradiation of dust in the interstellar medium. Their measurements, they say, could help explain how interstellar organic matter was incorporated into the solar system, and they provide new information for computational modeling, laboratory synthesis of similar grains, and laboratory ultraviolet photo-absorption measurements.

Lab Committed to Maintaining Much-Needed Library Journals

Imagine trying to drive to Sacramento without gasoline. You wouldn’t get very far. Similarly, Lab scientists say that Library journals are the fuel that energizes scientific research. While escalating subscription costs and a constrained overhead budget have threatened to cut off this intellectual fuel supply, Lab scientists and management realize the value of these resources to the Lab mission and are committed to maintaining a steady supply.

“The journals provide the base upon which we build our new results,” said Michel Van Hove, a scientist in the Materials Sciences Division and chair of the Lab’s Computing and Communications Services Advisory Committee (CSAC). “Without that base, we can’t do research. This is fundamentally different from many other services: we are unable to do research without full access to the literature, while most other services facilitate research to a degree that can be adjusted.”

With the support of Lab Director Steve Chu, CSAC and the Information Technologies and Services Division (ITSD) are working together to ensure that a high level of journal subscriptions will be restored. Previously, the LBNL library faced a potential cut of $500,000 from the FY 2004 level of funding.

Pictured at the Lab Library is the CSAC Library Group. Left to right are committee members Shaheen Tonse, Jane Tierney, Michel Van Hove (the group's chair), Mary Clary, and Corwin Booth.

To determine the amount of funding needed, a list of essential journals must first be created. The CSAC Library Group and ITSD are surveying Lab scientists on the importance of individual journal titles, asking them to vote for their favorite ones. The goal is to ensure that the breadth of journal titles is well represented in the final journal acquisition list. The survey is available at http://isswprod.lbl.gov/survey/journal/login.asp and will close on Monday at 8 a.m.

“We’ll also be looking at utilization and utility to make appropriate subscription decisions, including usage statistics of most frequently visited online journals, survey results and scientists’ forecasted journal needs,” said Sandy Merola, director of ITSD.

But Library journals are essentially a moving target, and their prices keep rising. One of the fastest-moving scholarly publishers has been the European conglomerate Elsevier, which had a profit margin of 30 percent in 2003, according to the Washington Post.

“We have a list of journal titles that we subscribe to, and each month we get notices that many have been purchased by Elsevier, making independent pricing negotiations with each of these journals obsolete,” said Jane Tierney, acting head of the LBNL Library. “This forces us to consider them as part of a bundled package, similar to a cable TV package. Suddenly, we have to purchase journals we don’t have interest in. This drives overall costs up and limits our ability to acquire titles competitively.”

In this environment of constant change, a committee of scientists and engineers that represents the breadth of the Lab, along with CSAC representatives, is being created and will provide input for the restoration of journals and future decisions involving the Library.

A decision on which journals will be reinstated is expected the last week of January. For more information, contact Library head Jane Tierney.

FAQ About Library Journals

Why is the cost of journals increasing every year?

The inflation rate of journals, when measured across for-profit and non-profit publishers, is about 12 percent annually. Continued consolidation of publishers of scientific journals has led to complaints of price-gouging and limited distribution rights.

Why can’t we just access the journals through UC Berkeley?

Only individuals who have UC Berkeley appointments have a CalNet ID and can access the Berkeley Library system online. All staff (as UC employees) have access to the physical holdings and interlibrary loans of UC Berkeley by obtaining a library card.

Why do we still spend money on print journals?

Some publishers require that we subscribe to the print copy to obtain online access. As a result of a survey of Lab scientists last year, we utilized savings from canceling print copies to purchase Web of Science, a unique online citation database index. We would like to increase our access to Web of Science back files as well.

Why do we have the physical library?

Within the UC library system, some of our physical holdings are unique. We partner with the UC system and other libraries worldwide to make these materials available to scientists by loan. Some materials are not yet available online, and may never be. For others, online access to back files costs thousands of dollars, even though we already own them in print; we use the print copies in the library collection to save money. We need to manage the holdings and make them conveniently accessible while keeping costs low and balancing space needs.

How has industry consolidation affected life sciences journals?

Unlike physics, chemistry and materials sciences, the life sciences lack long-established non-profit member societies that have fostered publishing in their fields, so the cost of life sciences journals has risen steadily. Elsevier, the largest scholarly publisher, has acquired many singular journals, such as Cell Biology, and this has led to a virtual monopoly on life sciences publishing, the fastest-growing portion of the scholarly journal market.

Berkeley Lab View

Published every two weeks by the Communications Department for the employees and retirees of Berkeley Lab.

Reid Edwards, Public Affairs Department head

Ron Kolb, Communications Department head

EDITOR

Monica Friedlander, 495-2248, msfriedlander@lbl.gov

STAFF WRITERS

Lyn Hunter, 486-4698

Dan Krotz, 486-4019

Paul Preuss, 486-6249

Lynn Yarris, 486-5375

CONTRIBUTING WRITERS

Jon Bashor, 486-5849

Allan Chen, 486-4210

David Gilbert, 925-296-5643

FLEA MARKET

486-5771, fleamarket@lbl.gov

Design

Caitlin Youngquist, 486-4020

Creative Services Office

Communications Department

MS 65, One Cyclotron Road, Berkeley CA 94720

(510) 486-5771

Fax: (510) 486-6641

Berkeley Lab is managed by the University of California for the U.S. Department of Energy.

Online Version

The full text and photographs of each edition of The View, as well as the Currents archive going back to 1994, are published online on the Berkeley Lab website under “Publications” in the A-Z Index. The site allows users to search past articles.

Flea Market

- AUTOS & SUPPLIES

- ‘99 Ford Ranger, 72K mi, 4 cyl, 5 sp, tinted rear win, tool box, Line-X bed liner, diamond plate bed caps, new Goodyear tires, CD player, clean inside & out, $5,000, Scott, X4356, 220-5725

- ‘92 TOYOTA COROLLA DX, 4-dr sedan, auto, fwd, 145K, ac, pwr steering, am/fm/ cass, new radiator/battery & Michelin tires, used engine, clean title, exc cond, $2,500, Muthu, X6308, (925) 952-4220

- ‘90 HONDA ACCORD EX, 4 dr, dark blue, auto, 108K mi, ac, pwr drs/win, orig owner, all maint records, $2,400/bo, Chuck, X4461, 521-6368

- HOUSING

- BERKELEY, 1 bl from UCB, lge, nicely-furn 1 bdrm apt w/ computer & DSL, $1,575/mo incl all util, Jin, 845-5959, jin.young@juno.com

- BERKELEY, 2 bdrm/2 bth top flr apt in duplex, quiet neighborhd, nr shops/rest/BART/public trans & UC, completely remodeled w/ new appliances, hardwd, tiles, spacious loft & hallway, lots of sunlight, lge deck, incl w/d, water, garbage, $2,100/mo + util, $2,000 sec dep, no pets/smok, avail 3/1, Vlad or Linda, 849-1579, lmoroz@earthlink.net

- BERKELEY HILLS, by wk/mo, quiet furn suite 1 bdrm/1 bth, liv rm, kitchenette, quiet, elegant & spacious, bay views, DSL, cable, walk to UCB, Pierre, 387-4015, pchew@pacbell.net

- BERKELEY/OAKLAND BORDER, 3 bdrm/ 1 bth home, $1,750 rent + $1,750 sec, close to shopping/BART, completely remodeled kitchen & bthrm, all new appliances incl range, refrig, d/w, garbage disposal, w/d combo, lge backyard w/ space for offstr parking & gardening, avail 2/1, open house Jan 15 & 22, 1 pm to 4 pm, 960 63rd St, Delia, 638-3992

- EL CERRITO, 2 bdrm/1 bth newly updated 4 plex condo, double-paned insulating windows, lge bright liv room, priv front & back entry, carpet, self cleaning gas stove & lge refrig, no pets, nr El Cerrito Plaza BART, $1,175 incl 2 parking spaces, sec $1,200, Subh, 928-5117, Phyllis, 524-4849

- NORTH BERKELEY by wk/mo, fully furn, 1/1 flat, quiet, spacious, dish TV, laundry rm, priv garden, gated carport, walk to shuttle/UCB/pub trans, Geoff, 848-1830, gfchew@mindspring.com

- NORTH BERKELEY, rm in newly-renovated duplex, $525+util, avail Feb, no smok/drug/ alcohol, 1.5 bthrms, 10 min walk to BART, parking avail, 1 mo rent for deposit, male or female, no pets, live w/ 2 women late 20s, Erin, (415) 516-1059

- NORTH BERKELEY HILLS, house w/ bay view, 4 bdrm/2 bth, hardwd flrs, liv rm, dining rm, breakfast rm, kitchen w/appliances, w/d, enclosed sunroom off master bedrm w/multi-bridge view, water paid & gardening incl, avail now, $2,500/mo, Kenneth, 843-5253

- NORTH OAKLAND, quiet, cozy cottage w/ priv ent in backyard garden, cable-ready, carpet, lge closet, eat-in kitchen, nr pub trans/BART, no smok/ pets, 1-yr lease, $1,050/ mo incl all util, dep $2,100, ref & credit check req, 654-2863, sr_hd@gtcinternet.com

- MISC ITEMS FOR SALE

- STEREO SPEAKERS, new in box, never used, $75 for both, Teresa, X4270

- ROLLING WALKER, 3 whls, hand brakes, pouch, exc cond, $65; shower chair w/ back support, adj height, good cond, $35, Ron, X4410, 276-8079

- GENERATOR, Coleman 1850 watt, less than 50 hrs, Kawasaki engine, weighs ~ 65 lbs w/ handle, $375, Frank, X4552

- BOOKCASE, teak, drop-lid, 30”x16”x75”, $45; beige lacquer dresser, 62”x29”x17”, $95, Sue, 595-7123

- WANTED

- SOCCER PLAYERS, for a co-ed team in San Ramon/Danville, games start in March & are Sunday mornings at 9 or 11, women must be at least 27 y.o. & men at least 30 y.o, Randy, X7026

- VACATION

- SOUTH LAKE TAHOE, spacious chalet in Tyrol area, close to Heavenly, fully furn, sleeps 8, sunny deck, pool & spa in club house, close to casinos & other attractions, $150/day + $100 one-time clean fee, Angela, X7712, Pat or Maria, 724-9450

- PARIS, FRANCE, nr Eiffel Tower, furn, eleg & sunny 2 bdrm/1 bth apt, avail yr round by week/mo, close to food stores/rest/pub trans/shops, 848-1830

- NORTH LAKE TAHOE house at Kings Beach, 3 bdrm/2 bth, sleeps 6, full kitchen, liv room & dining area w/ fireplace, quiet cul-de-sac, great neighborhd, 10 min from Northstar, no smok/pets, $150/night (2 nights min) + $95 cleaning fee, or $725/ week, Vlad or Linda, 207-1255

People, Awards and Honors

Bustamante, Commins receive teaching awards

Two Berkeley Lab physicists were honored with teaching awards by the American Association of Physics Teachers. Carlos Bustamante of the Physical Biosciences Division won the Richtmyer Award for conveying physics to public audiences. Emeritus physics professor Eugene Commins, who was the graduate advisor for Lab Director Steve Chu, won the prestigious Oersted Medal for teaching. Commins is known for his hunt for the elusive “electric dipole moment of the electron.” Chu credits Commins for his qualities as a mentor and for often working side-by-side with his students late into the night.

The work of Berkeley Lab scientists in the Accelerator and Fusion Research Division, under the leadership of Wim Leemans, has been honored by Nature magazine as one of the top 10 research highlights for 2004. The journal cites table-top accelerators, with the laser focusing advances announced in September by Leemans’ team, as having the potential to whittle down a particle accelerator “from the size of a football stadium to the size of a lab.”

In Memoriam

Dorothy Denney

Dorothy Denney, a Lab librarian for nearly 40 years, died peacefully at home on December 16 after a long battle with cancer. She was 81.

Until her retirement from Berkeley Lab in 1989, Denney provided library support to the Department of Biophysics and Medical Physics. “She contributed so cheerfully to the success of the work of all of us, and she did so with such tremendous commitment,” said Robert Glaeser, former chair of the library committee for the Donner Laboratory Library.

Born on July 6, 1923 near Lancaster, Ohio, Denney moved to Berkeley in 1948 after graduating from Michigan State University. She married Robert D. Denney in 1950 while working as a librarian at Donner Library. They built a house in Piedmont Pines, a few miles from the University, where she continued to live and work until her retirement.

She is survived by her husband, Robert Denney, children Karen, Janice and Richard, brother Charles, and sister Frances.

Donations in her memory may be sent to Sutter VNA & Hospice, 1900 Powell St., Suite 300, Emeryville, CA 94608 or to the American Cancer Society, Attn: Web, P. O. Box 102454, Atlanta, GA 30368-2454.